News & Archive

Deep dives, tutorials, and technical explorations from the ZeroEntropy team.

Zemail: Semantic Gmail Search on Claude Code & Cowork

Zemail is a free Claude Code/Cowork plugin that builds a local semantic index of your Gmail inbox. Keyword search can't find the email you're thinking of. A reranker can.

The Best Embedding Model for Conversational AI in 2026

zembed-1 achieves the highest conversational retrieval benchmark score with a +35% advantage over OpenAI, bridging the gap between informal user queries and formal knowledge bases.

The Best Embedding Model for Finance in 2026: Why zembed-1 Wins

zembed-1 outperforms all benchmarked competitors on finance-domain retrieval, with a 32k context window, flexible compression, and Elo-calibrated relevance for regulatory compliance, earnings analysis, and investment research.

The Best Embedding Model for Code in 2026: zembed-1 Tops the Leaderboard

zembed-1 achieves the highest benchmark score of any embedding model in the code domain at 0.6452 NDCG@10, while simultaneously leading every other domain -- the only model that is best-in-class everywhere.

The Best Embedding Model for Healthcare in 2026: zembed-1 Leads the Field

zembed-1 achieves 0.6260 NDCG@10 on healthcare retrieval benchmarks, leading competitors by up to +31.8%, with multilingual support, 32k context, and self-hosting for HIPAA compliance.

The Best Embedding Model for Legal in 2026: zembed-1 Sets the Standard

zembed-1 achieves 0.6723 NDCG@10 on legal retrieval benchmarks, outperforming all competitors by up to +31.8%, with Elo-calibrated relevance, 32k context, and quantization for massive legal corpora.

The Best Embedding Model for Manufacturing in 2026

zembed-1 leads manufacturing domain benchmarks with a +12.9% advantage over the nearest competitor, powering industrial AI retrieval for maintenance, quality, and engineering applications.

The Best Embedding Model for RAG and Enterprise Search in 2026

zembed-1 achieves the highest NDCG@10 on MSMARCO and leads every domain-specific benchmark, making it the ideal foundation for enterprise RAG systems.

The Best Embedding Model for STEM and Mathematics in 2026

zembed-1 leads STEM and mathematics retrieval benchmarks, outperforming OpenAI by 35% and all competitors in scientific document search.

The Best Embedding Model of 2026: Why zembed-1 Is in a Class of Its Own

zembed-1 is the first embedding model to lead every domain benchmark simultaneously — finance, healthcare, legal, manufacturing, code, STEM, and conversational — while topping MSMARCO at 0.946 NDCG@10.

The Best Multilingual Embedding Model in 2026: zembed-1 Was Built for the World

zembed-1 was built with over 50% non-English training data, delivering true multilingual parity and cross-lingual retrieval across all major world languages with 0.946 NDCG@10 on MSMARCO.

harrier-27b: Can 27B Parameters Beat zembed-1?

A head-to-head evaluation of ZeroEntropy's zembed-1 against all three Harrier embedding models across 24 diverse datasets, using graded LLM relevance judgments.

Smarter Context Compression for LLM Pipelines: zerank-2 as a Calibrated Classifier

How to use zerank-2's calibrated relevance scores as a binary classifier for context compression, document routing, and multi-label classification — at 50-100x less cost than LLM classification.

Beyond Binary: A New Version of the MTEB

We re-annotated 24 MTEB datasets with LLM pointwise scoring on a 0-10 scale using three independent judges. With graded relevance, the retrieval leaderboard looks meaningfully different.

Bi-Encoders vs Cross-Encoders

Why production search uses two models, not one — and what happens when you pair them.

zembed-1 vs voyage-4

A thorough evaluation comparing the performance of ZeroEntropy's zembed-1 and Voyage's latest voyage-4 embedding models.

"Let's eat, grandma" vs "let's eat grandma": how embedding models encode the world

A deep dive into how embedding models encode meaning, why famous training examples create the illusion of capability, and what consistent behavior across 10k+ nouns tells us about genuine understanding.

Introducing zembed-1: The World's Best Text-Embedding Model

2026's Top 10 Embedding Companies Powering Search Technology

Best Embedding Model for Legal Document Search in 2026

The Geometric Limitations of Vector Embeddings in Retrieval Systems

How Assembled Powers High-Quality AI Customer Support with ZeroEntropy

After integrating ZeroEntropy's reranking into their retrieval pipeline and validating it on live production traffic, Assembled migrated 100% of their reranking volume to ZeroEntropy.

Prompting Best Practices For Instruction-Following Rerankers

This guide covers best practices for prompting a reranker to achieve state-of-the-art relevance.

Open-source alternatives to Cohere Rerank in 2026

Learn about the open-source and open-weight rerankers to use as an alternative to closed-source models.

Latency Performance Assessment of zerank-2

In this post, we go over the latency performance of zerank-2, ZeroEntropy's latest reranker model.

Introducing zerank-2: The Most Accurate Multilingual Instruction-Following Reranker

zerank-2 is ZeroEntropy's latest multilingual cross-encoder model, tuned for instruction-following and score calibration.

The Latency Myth: Why Reranking Is Still the Smartest Optimization You Can Make

Yes, technically, rerankers add a layer to your search pipeline. Yet, reranking improves both efficiency and quality once you consider the full retrieval-generation loop.

Context Engineering Webinar: Everything You Missed

Thanks to everyone who joined our first Context Engineering Webinar!

How Vera Health Achieved State-of-the-Art Clinical Accuracy Using ZeroEntropy

Vera Health sets a new accuracy record on medical question answering using ZeroEntropy Search and Rerank APIs.

Equall Improves Legal Document Structuring and Retrieval Accuracy with ZeroEntropy

ZeroEntropy's reranker consistently outperformed every alternative we tested — recall@10, recall@5, across datasets. It's fast, stable, and a no-brainer for legal document pipelines.

Implementing ZeroEntropy Reranking with turbopuffer Retrieval

Learn how to implement a two-step search pipeline for fast and accurate retrieval, using turbopuffer and ZeroEntropy.

Paper TLDR: How we trained zerank-1 with the zELO method

This is a short TLDR summarizing the main concepts in the zELO paper. We describe the training approach of ZeroEntropy's reranker zerank-1.

Mem0 Improves Memory Retrieval Accuracy with ZeroEntropy

Mem0 migrated their production rerank traffic to ZeroEntropy's zerank-1, a critical component of their retrieval stack.

On The Geometric Limit of Dense Single Vector Embeddings

Learn why embeddings are usually not enough in a search pipeline, and how to fix some of their most common pitfalls.

Should You Use LLMs for Reranking? A Deep Dive into Pointwise, Listwise, and Cross-Encoders

Are LLMs cheap, calibrated, and fast enough for reranking tasks? How should you select the best results from a candidate set of k=100, or even k=200? We go over the pros and cons of using LLMs as rerankers and what the best approach is.

My AskAI Improves Support Agent Latency and Accuracy with ZeroEntropy

My AskAI replaced its existing reranker with ZeroEntropy's zerank-1 across production traffic. Results: faster responses at scale, a measurable lift in answer quality, and lower cost.

Ultimate Guide to Choosing the Best Reranking Model in 2026

How do you pick a reranker model for your RAG stack in 2025?

How to Do RAG with Mastra and ZeroEntropy

Building Production RAG with Mastra and ZeroEntropy Reranking

Why Evaluation Metrics for Reranking Matter in Search Quality

Learn why evaluation metrics for reranking are crucial in improving search engine performance. Discover how they ensure more accurate and relevant search results.

ZeroEntropy's zerank-1 vs. Jina AI's jina-reranker-m0

How does Jina AI's jina-reranker-m0 compare to ZeroEntropy's zerank-1? In this blog post, we compare the two on latency, accuracy, and pricing.

Best Reranker for HR Knowledge Bases

Automate employee Q&A, HR policy lookups, and job search and matching with smarter reranking.

Latency Benchmark: Cohere rerank 3.5 vs. ZeroEntropy zerank-1

zerank-1 delivers the fastest, most accurate reranking for AI search—up to 14× faster than Jina and 12% faster than Cohere. Try it via API or Hugging Face.

2-Norm Vector

Understanding 2-Norm Vectors in Vector Similarity Calculations

Should You Use an LLM as a Reranker? Pros, Cons, and Benchmarks

Explore whether using an LLM as a reranker makes sense — with tradeoffs, latency impacts, and real-world data.

Best Reranker for Healthcare AI

Boost clinical search accuracy and patient data retrieval with domain-specific reranking.

Best Reranker for Legal Document Search

Transform legal research workflows with intelligent, explainable rerankers.

Announcing ZeroEntropy's First Rerankers: zerank-1 and zerank-1-small

Meet zerank-1: ZeroEntropy's powerful new reranker, outperforming Cohere and Gemini with up to 28% higher precision—now live via API and Hugging Face.

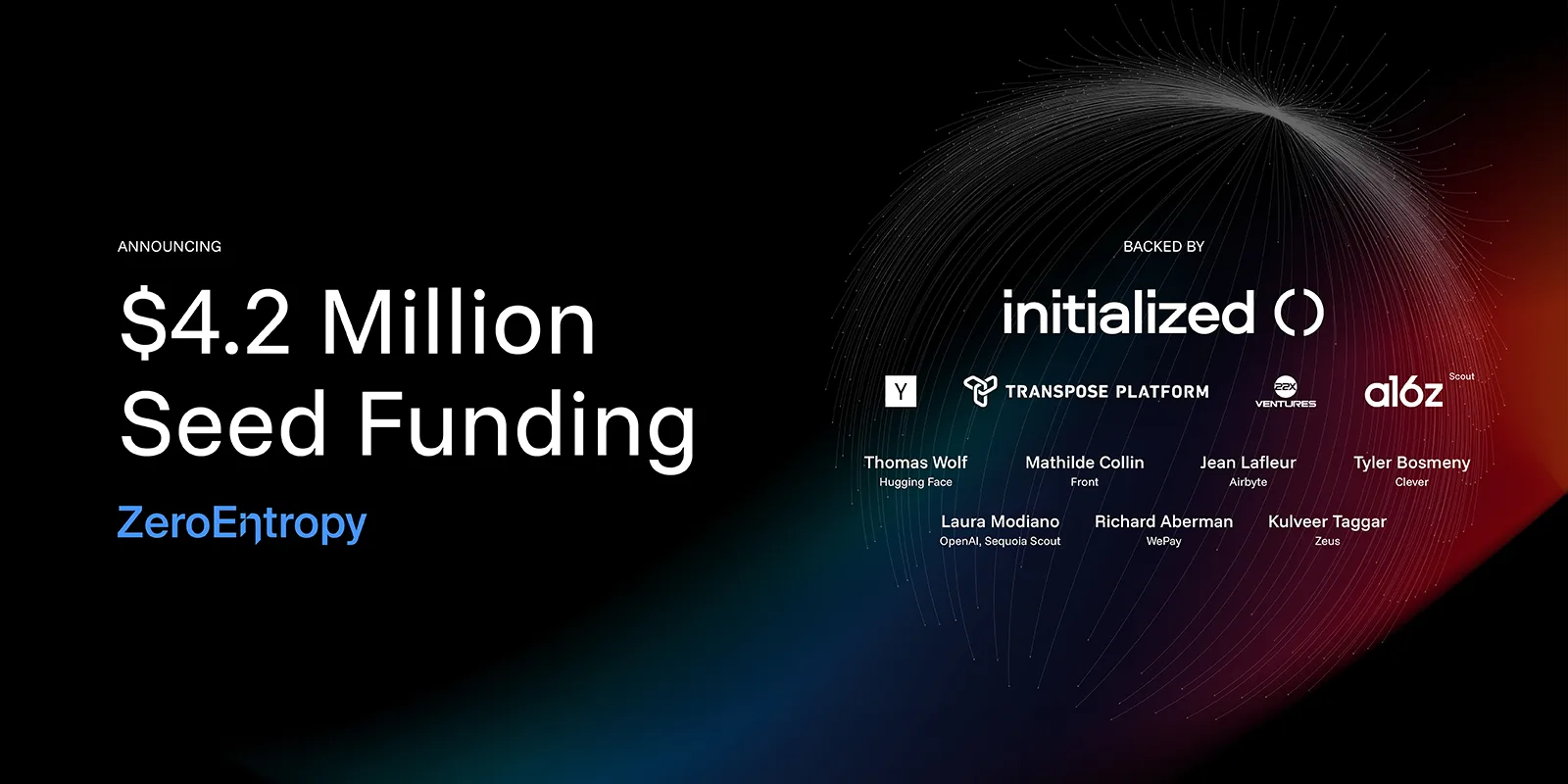

ZeroEntropy Raises $4.2M Seed Round to Make AI Retrieval Truly Intelligent

ZeroEntropy raises $4.2M to reinvent AI retrieval—enabling lightning-fast, accurate search across unstructured data. Backed by YC, Initialized & more.

Improving Retrieval with ELO Scores

ZeroEntropy uses chess-inspired ELO scores to train the next-gen reranker—boosting accuracy with nuanced, scalable relevance scoring. Try zerank-1 today.

What is a reranker and do I need one?

Learn what a reranker is and how it boosts precision in RAG pipelines—surface the right results faster, reduce hallucinations, and improve LLM output quality.

Deep Dive: The Architecture of ZeroEntropy v1

Discover how ZeroEntropy v1 blends BM25, dense embeddings, and LLMs for lightning-fast, accurate hybrid search over unstructured documents.

AGI requires better retrieval, not just better LLMs

AGI needs more than LLMs—it needs smarter retrieval. Learn how to identify failure modes in RAG and evaluate search accuracy with ZeroEntropy's benchmarks.

LlamaChunk: A General and Cost Efficient Approach to Semantic Chunking

Learn how LlamaChunk delivers fast, accurate semantic chunking for RAG—outperforming regex and embedding methods with LLM-guided document splitting.

LegalBench-RAG, the First Open-Source Retrieval Benchmark for the Legal Domain

LegalBench-RAG is the first open-source benchmark for legal RAG retrieval—6,800+ queries, 79M+ characters, human-annotated spans. Evaluate legal AI today.